When Algorithms Won An Ancient Human Game And Became “AI”

A truly original move, a word adopted into our vocabulary, and another way of thinking about ourselves

“I am confident about the match. I believe human intuition is too advanced for AI to have caught up. I’m going to do my best to protect human intelligence.”

— Lee Sedol, Go Grandmaster (9th Dan), prior to his match with AlphaGo, AlphaGo (Documentary)

It’s hard to know when a new idea truly ingrains itself in a society, let alone to pin down its exact starting point or originator. Famed music producer Rick Rubin believes ideas come to us from an external source (the universe).

In his book The Creative Act: A Way of Being, he says those with “sensitive antennae” can feel it and pull it out of the ether. If one doesn’t catch it, the idea will wait until its signal is picked up by a receiver sensitive enough.

That’s why, he argues, ideas can seem to appear in many places at once. It’s a bit hokey and cosmic, but he may have a point. Take the ancient two-player game “Go”: according to some, it’s four thousand years old, while others put it at about half that age.

The Go aficionado John Fairbairn notes that the game (“Weiqi” in Pinyin Chinese) is mentioned in the Analects of Confucius and in the Zuo Zhuan, a text describing events of the fifth century BC. He believes, though, that it may have derived from “divining boards” used to consult the heavens long before that.

But no one knows for certain. The game is thought to have reached Japan from Korea about fifteen hundred years ago, and its modern name, “Go,” comes from the Japanese “Igo.” Words can often help us narrow down origins.

Personally, I remember a time when few people used the term “internet,” but now it’s ubiquitous across the population, from young to old. You see something similar now with the term “AI.” If you don’t believe me, try an experiment.

Ask a random person you meet what they think about AI, and I’m willing to bet they’ll be able to engage you in a conversation. They’ll have heard of it, might be scared or awed by it, and will likely have an opinion of it.

AI, both the term and the technology, has penetrated deep into our society, but its tipping point is far less murky than Go’s origins. In fact, the two are forever linked: computers officially became “AI” in the public mind once they mastered this ancient game in March 2016.

The Impenetrable Wall Of Go

While a computer first beat a reigning world chess champion in 1997, Go proved far more difficult. In the documentary AlphaGo, director Greg Kohs tells the story of why Go was considered the “holy grail” of computer intelligence.

In the film, Frank Lantz, director of NYU’s Game Center, describes Go as “putting your hand on the third rail of the universe,” because it demands so much focus that you’re always being pushed to the limit of your capacity.

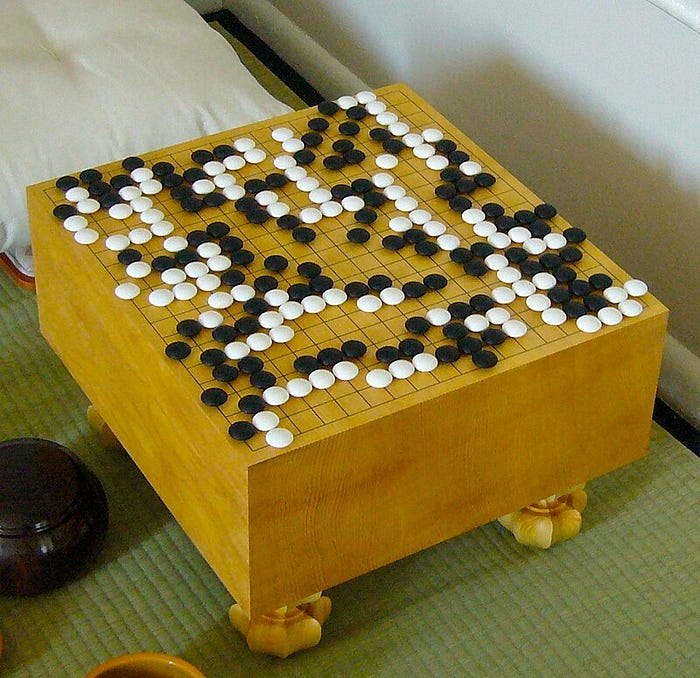

The goal is simple: control the most territory on the board with connected groups of your stones, black or white. But Lantz points out that you’re given almost unlimited choices in how to do this, and each choice sends out ripples that affect the others. In a way, it’s like the complexity of a life.

Demis Hassabis, co-founder and CEO of Google’s DeepMind, says that in chess there are about twenty possible moves per position, while in Go there are about two hundred. Plus, the number of possible “configurations on the board is more than the number of atoms in the universe.”

He claims that if you set all the computers in the world to the task of calculating the possible variations, it would take a million years. Moreover, decisions are so complex that Go players often move by “intuition.” Hassabis and DeepMind set out to build an algorithm, AlphaGo, that could mimic this.
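To make the gap Hassabis describes a little more concrete, here is a rough back-of-the-envelope sketch in Python. It assumes, simplistically, that every position offers exactly the round figures he quotes (20 moves in chess, 200 in Go) and uses the commonly cited estimate of roughly 10^80 atoms in the universe. Real move counts vary from position to position, and the function names are illustrative only.

```python
# Back-of-the-envelope game-tree growth, ASSUMING every position offers exactly
# the round figures quoted above: ~20 moves in chess, ~200 in Go.
CHESS_MOVES_PER_POSITION = 20
GO_MOVES_PER_POSITION = 200
ATOMS_IN_UNIVERSE = 10 ** 80  # commonly cited rough estimate


def sequences(moves_per_position: int, depth: int) -> int:
    """Distinct move sequences when looking `depth` moves ahead."""
    return moves_per_position ** depth


def depth_to_exceed_atoms(moves_per_position: int) -> int:
    """Smallest lookahead at which the move sequences outnumber ~10**80 atoms."""
    depth = 0
    while sequences(moves_per_position, depth) <= ATOMS_IN_UNIVERSE:
        depth += 1
    return depth


for depth in (2, 4, 8):
    chess = sequences(CHESS_MOVES_PER_POSITION, depth)
    go = sequences(GO_MOVES_PER_POSITION, depth)
    # go / chess is exactly (200 / 20) ** depth = 10 ** depth
    print(f"{depth} moves ahead: chess {chess:.1e}, Go {go:.1e}, ratio {go // chess:,}")

print("Chess lookahead needed to pass 10^80:", depth_to_exceed_atoms(CHESS_MOVES_PER_POSITION))
print("Go lookahead needed to pass 10^80:", depth_to_exceed_atoms(GO_MOVES_PER_POSITION))
```

Under these simplified assumptions, chess needs 62 moves of lookahead before the count passes 10^80, while Go needs only 35. This counts move sequences rather than the distinct board configurations Hassabis mentions, so it illustrates the trend rather than reproducing his figure.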

After much work, they arranged a match against the European Go champion, Fan Hui, to be played out on film. In pre-match interviews, Fan was completely confident because of Go’s complexity. But the program crushed him five games to none.

After each loss, Fan was so stunned that he had to get up and leave the offices to go for a walk. Losing at Go to a computer program didn’t make logical sense. But AlphaGo was different.

How A Program Can Play And Win Go

“I’m not happy to lose the game, but I will be happy for play in the history.”

— Fan Hui, AlphaGo (Documentary)

According to Cade Metz at Wired, AlphaGo was a multi-stage program. The first stage used deep neural networks, which are loosely modeled on the neurons in a human brain and learn from examples, somewhat as we do. Feed such a network enough pictures of cats, for instance, and it can learn to pick one out.

Metz notes that, at the time, this technology could pick out faces, follow voice commands, carry on limited conversations, and, in AlphaGo’s case, learn the fundamentals of Go by analyzing thirty million moves by high-ranking players.

The large language models we’re familiar with today, like ChatGPT, are also built on deep neural networks: they’re trained on vast amounts of data and learn to make predictions. But AlphaGo had another stage, called reinforcement learning.

This stage requires no pre-selected data to learn from; the program generates its own. In other words, different versions of AlphaGo played each other in millions of simulated matches, learning which tactics were most likely to win. This self-generated data was then used to train a final neural net.
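The self-play loop described above can be illustrated on a far smaller game. The Python sketch below is a hypothetical toy, not DeepMind’s method: it swaps Go for a simple take-away game (remove one to three stones; whoever takes the last stone wins) and swaps deep neural networks for a plain lookup table of estimated win rates. The names and settings (`train`, `epsilon`, `step`, 20,000 games) are illustrative assumptions. What it shares with the description is the core idea: one program plays both sides, and the outcomes of its own games become its training data.

```python
import random
from collections import defaultdict

# HYPOTHETICAL toy game standing in for Go: players take turns removing
# 1-3 stones from a pile, and whoever takes the last stone wins.
MAX_START = 12
MOVES = (1, 2, 3)


def legal_moves(stones):
    return [m for m in MOVES if m <= stones]


def choose_move(values, stones, epsilon, rng):
    """Epsilon-greedy: usually play the move with the best estimated win rate,
    occasionally explore a random one."""
    moves = legal_moves(stones)
    if rng.random() < epsilon:
        return rng.choice(moves)
    return max(moves, key=lambda m: values[(stones, m)])


def self_play_game(values, epsilon, rng):
    """Both sides consult the SAME table, so the program plays against itself.
    Returns each side's (stones, move) history and the winning side."""
    stones = rng.randint(1, MAX_START)  # varied starts so every position gets practice
    history = {0: [], 1: []}
    player = 0
    while True:
        move = choose_move(values, stones, epsilon, rng)
        history[player].append((stones, move))
        stones -= move
        if stones == 0:
            return history, player  # this side took the last stone
        player = 1 - player


def train(games=20000, epsilon=0.2, step=0.05, seed=0):
    """Generate training data by self-play and learn from the outcomes."""
    rng = random.Random(seed)
    values = defaultdict(lambda: 0.5)  # untried moves start at a 50% win estimate
    for _ in range(games):
        history, winner = self_play_game(values, epsilon, rng)
        for player, played in history.items():
            outcome = 1.0 if player == winner else 0.0
            for key in played:
                # Nudge each move's estimate toward the result of the game it appeared in.
                values[key] += step * (outcome - values[key])
    return values


def best_move(values, stones):
    return max(legal_moves(stones), key=lambda m: values[(stones, m)])


values = train()
for stones in range(1, MAX_START + 1):
    print(f"{stones:2d} stones: take {best_move(values, stones)}")
```

With these settings the table typically rediscovers the known winning strategy for this game, leaving the opponent a multiple of four stones, without ever being shown a human example. Piles that are already a multiple of four are lost against perfect play, so whatever move the table picks there is arbitrary.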

The result was a Go-playing program like no other. But DeepMind had one more challenge to prove the technology: defeating Lee Sedol, one of the greatest Go players in the world.

“Move 37,” A Truly Original Move

While beating Fan was an achievement, Lee was something else entirely. Professional Go players hold ranks, a bit like the hierarchy of belts in martial arts: Fan was a “2nd dan,” while Lee was a “9th dan,” the highest rank. The DeepMind team became so concerned that they even hired Fan to spar with AlphaGo regularly.

Non-players may not know his name, but Lee was something like the Tiger Woods of Korea. He turned professional at twelve and won forty-eight titles over his career. The documentary notes that he had dominated the game for a decade and won eighteen world championships.

In China, Korea, and Japan, Go wasn’t just a game; it was a marker of elite status. It’s considered one of the four “noble” arts, along with music, calligraphy, and painting. When the match was signed to take place in Seoul, South Korea, in March 2016, a local media sensation began.

Reporters crammed into the Four Seasons Hotel to watch, and AlphaGo stunned onlookers by attacking aggressively and winning the first game. Then, in game two, something truly bewildering happened.

Lee had stepped away from the table for a break after placing a stone. While he was gone, the computer made its move, which one commentator at first called a mistake. Other experts, however, noted that it was something they’d never seen before: a “truly original move” in an ancient game.

It became famously known as “move 37,” and when Lee returned, he stared at the board in confusion for a solid eight minutes, then fell apart. In the end, AlphaGo took four out of five games, crushing the champion. But something else happened too.

As Lee went down in flames, onlookers grew uneasy, and even the DeepMind team admitted to feeling sad. It felt as if humanity had lost its cherished gift of originality and uniqueness to a dead thing of silicon and plastic.

Even “9th Dan” Lee Sedol couldn’t “protect human intelligence,” and it seemed we could all be replaced. He retired from professional Go in 2019, saying AI was an entity that could not be defeated.

In a way, it was a catharsis for our entire species, recorded on camera: a moment for future historians. Suddenly, computers weren’t dumb machines, and the term “artificial intelligence” took on a new meaning in our culture. Rick Rubin, however, sees it differently.

Another Way Of Thinking About Humanity’s “Loss”

“We tend to believe the more we know, the more clearly we can see the possibilities available. This is not the case. The impossible only becomes accessible when experience has not taught us limits.”

— Rick Rubin, The Creative Act: A Way of Being

The legendary music producer believes AlphaGo won because it knew less than Lee Sedol. Although it was initially trained on human games, the algorithm learned much of its play on its own, without thousands of years of preconceived notions. Rubin calls this state “beginner’s mind.”

Artists famously seek this state in order to create something truly original; the machine simply reminded us of it. In fact, AlphaGo even forced Lee to grow stronger in order to win his one game.

In game four, Metz says, Lee played a move that no one watching saw coming, which became known as “God’s Touch.” It created a “wedge” where none seemed available and sent AlphaGo into a death spiral of collapse.

While AI could replace us, it doesn’t have to. It’s not a binary choice. Wolves became dogs, our best friends and partners for millennia. Fire and electricity were once untamed dangers; now they’re tools.

We don’t have to “lose” to AI. Used properly, it can be one more thing that makes us better. Specifically, it could be the one tool that thinks differently, continually reminding us of the power of a beginner’s mind.

Just as Go has been a tool for self-development since ancient times, perhaps AI can be the same under the right circumstances.

-Originally posted on Medium 1/27/24